Across organizations, AI slipped into HR through quiet product updates, reshaping who gets hired, promoted, or managed out long before most leaders realized what had changed. This article looks at how unvalidated AI features embedded in common HR tools create governance and compliance risks, why CHROs are being held accountable for systems they didn’t choose, and what real AI oversight must look like in 2026 for Canadian employers.

AI is already in your HR stack. That’s the problem.

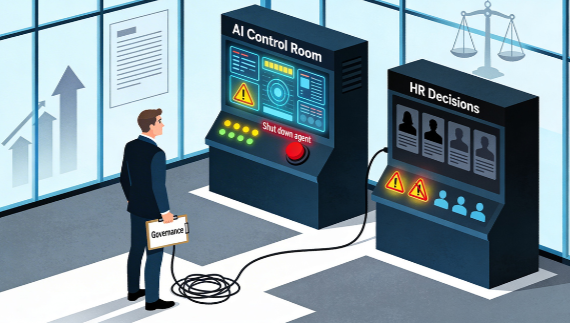

If firms like KPMG are publishing guidance on emergency stop and control mechanisms for AI agents, what does that tell you about the tools you quietly switched on in HR without a second thought?

Let’s be honest about how most of this showed up.

In many Canadian organizations, AI didn’t arrive as a big, debated decision. It crept in as a feature release inside tools people were already using.

Your ATS added an “AI screening” toggle. Your HCM added “AI talent matching.” A point solution promised “AI scoring” for interviews or “AI nudges” for performance.

You didn’t sit down and ask, “Are we comfortable letting an opaque model influence who gets hired, promoted, or managed out?” It just appeared in the product update notes.

And if you’re using newer wraparound tools that bolt AI onto LinkedIn, your ATS, or your inbox, there’s a good chance they haven’t gone through rigorous independent validation, have no publicly available bias audits, and offer no reproducible documentation explaining how the system actually works.

With many off-the-shelf tools, you don’t know what data trained the model, how that data was handled, how performance was measured, or how the system behaves for people who differ from those in the training data.

What you do get is marketing language: debiased, validated, trusted AI, ethical by design. What you don’t get, in most cases, is a bias audit you could hand to a regulator, reproduce internally, or confidently stand behind.

Now layer on governance.

Risk teams, IT, and large consulting firms are building ‘trusted AI’ frameworks with hard-stop controls, monitoring, and risk committees. This work is important. If your systems can move money, change code, or affect infrastructure, you need clear controls.

But here’s the gap.

You might have a kill switch for an AI agent that affects infrastructure, but no similar control for the AI that quietly influences hiring, promotions, or exits.

And this is why I’m not buying the usual reassurance: “Don’t worry, there’s a human in the loop.”

Research on hiring and automation bias has shown that when algorithmic recommendations are presented, humans often defer to them, even when those recommendations reflect bias. In one recent hiring experiment, when participants saw biased recommendations from an AI screener, they tended to mirror that bias; when the AI was removed, their decisions were more balanced and relied more on their own judgment.

Put simply, once the tool gives an answer, we tend to trust it, even if it’s wrong.

So if your safety model is “we’ll put a human in the loop, and they’ll catch the issues,” you don’t have a safety model. You have wishful thinking.

Here’s another important fact: In Beamery’s 2025 workplace AI survey, HR was described as ‘often sidelined’ in AI transformation, with CEOs, CIOs and digital leads driving most AI decisions, and CHROs named as key decision-makers only a small fraction of the time.

This is the reality in many organizations today:

- The tools are opaque and under‑validated

- HR didn’t choose them and can’t fully explain how they work

- Humans tend to trust whatever the system surfaces

- Regulators and courts increasingly expect replicable explanations and bias audits

- And HR is increasingly being asked to answer for outcomes it did not design and cannot fully audit

If you’re a CHRO or senior HR leader, this isn’t a call to panic about AI. It’s a call to panic about governance.

The real questions now are:

- Which of the HR tools we use today contain embedded AI, and exactly where do they shape hiring, promotion, performance, or exits?

- For each tool, can we see in writing how the model was trained, what data it uses, and how bias and performance were tested?

- Do we have independent bias audits we could hand to a regulator or human‑rights tribunal and confidently defend?

- Who in the organization has the authority to say no to a vendor or switch off a use case if we can’t answer those questions?
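
For teams that want to track these four questions per tool, here is a minimal sketch of an inventory check in Python. Everything in it is an assumption for illustration: the `HRToolRecord` fields, the `open_questions` helper, and the two example tools are hypothetical and do not come from any vendor, regulator, or standard.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class HRToolRecord:
    """One row in a hypothetical inventory of AI features in the HR stack."""
    name: str
    decisions_influenced: List[str]   # e.g. ["hiring", "promotion"]; empty if not yet mapped
    training_docs_in_writing: bool    # vendor documented training data and testing in writing
    independent_bias_audit: bool      # an independent audit exists that HR could hand over
    off_switch_owner: Optional[str]   # who can say no or switch it off; None if nobody


def open_questions(tool: HRToolRecord) -> List[str]:
    """Return the governance questions that are still unanswered for one tool."""
    gaps = []
    if not tool.decisions_influenced:
        gaps.append("decision points not mapped")
    if not tool.training_docs_in_writing:
        gaps.append("no written model documentation")
    if not tool.independent_bias_audit:
        gaps.append("no independent bias audit")
    if tool.off_switch_owner is None:
        gaps.append("no one authorized to switch it off")
    return gaps


# Hypothetical example entries, not real products or findings.
inventory = [
    HRToolRecord("ATS AI screening", ["hiring"], False, False, None),
    HRToolRecord("HCM talent matching", ["promotion"], True, True, "CHRO"),
]

for tool in inventory:
    gaps = open_questions(tool)
    status = "unmanaged risk" if gaps else "questions answered"
    print(f"{tool.name}: {status} {gaps}")
```

A spreadsheet would do the same job. The point is that every AI feature gets a row, and any row with open gaps is a candidate for a “no” until the governance catches up.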

If any function is going to own the people side of this, it has to be HR: setting what’s fair, what has to be transparent to employees, where humans stay accountable, and when the right move is to say “no” to a tool or a use case until the governance catches up.

If you can’t answer those questions, AI isn’t your competitive edge. It’s unmanaged risk, already embedded in your employment decisions, and HR is being asked to own the consequences without owning the system.

This isn’t an AI problem. It’s a governance failure.

#AIinHR #AIGovernance #CHRO